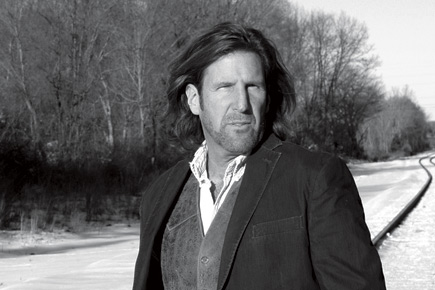

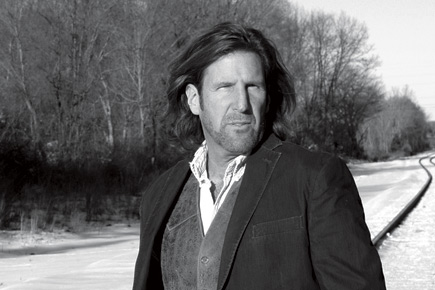

Raymond Bechard has been campaigning to keep the social networking site free of child pornography

As a fervent supporter of causes for children and women, I soon stumbled upon the online campaign ‘Force Facebook to Block all Child Pornography’ by Men against Prostitution and Trafficking (Menapat). The social networking site that has become such an indispensable part of our lives had apparently turned into a haven for child exploiters.

According to Raymond Bechard, executive director of Menapat, one of the first to take note of this phenomenon, Facebook pages devoted to child pornography and paedophile activities are used to exchange photographs, videos and posts. Before Bechard came into the picture in 2010 with his campaign to get Facebook to take action, child nudity was easy to come by. Now, says Bechard, the images are “just on the edge, but you still wouldn’t want your child to be that”.

A quick scan brings up fake profiles with photographs of children as young as six or seven years of age, skimpily clad and in sexual poses. A girl barely 12 years old, with poker straight brown hair, glamorous eye make-up, accentuated lips and a spaghetti strap top through which an electric blue bra strap is clearly visible. Another girl, maybe 11 years old, lifting her bikini top, her legs wide apart. One of the comments on this photograph reads, ‘Young hot sexy.’

The US-based Bechard was doing research for his book The Berlin Turnpike, on human trafficking in America, when he came across similar photographs of children on Facebook. His curiosity was piqued when the classified advertisement website Craigslist, headquartered in San Francisco, shut down its ‘Adult services’ section in 2010 after being accused of housing and promoting prostitution, as well as child and human trafficking. “I realised that these advertisements marketing sex services had to move somewhere else on the internet, which included websites like escorts.com, backpage.com. But I soon realised that a lot of it had, in fact, migrated to Facebook. You see, unlike Craigslist, Facebook allowed these traffickers to contact people themselves. They didn’t have to wait for someone to contact them,” says Bechard.

Bechard then created a false profile for himself on Facebook under the alias Heather Fey. “Heather,” he says, “was an attractive, fun and outgoing girl. She had several open galleries for people to see on her ‘profile’ in which there were images that were quite sexy. Her profile picture, too, was of the same nature. She used to update it frequently and men could contact her openly.” Within a week, Heather had about 600-odd friend requests; some of these profiles had pictures of children on them. Delving deeper, he found that these profiles had open galleries with images of children posing provocatively; some were nude and these were open for anyone to see on Facebook.

“So yeah, that’s pretty much when I realised that this is something that shouldn’t be here,” he says. “We knew that something was systemically wrong, so in addition to reporting it to Facebook, we reported it to the National Center for Missing and Exploited Children [in the US] and also to the FBI.”

In 2011, Bechard started Menapat to take up the cause. While tracking the pages, Bechard found that paedophiles had an easy way of contacting one another. While certain profiles had children’s pictures, some referred to the book Lolita or contained words like ‘child’, ‘pedo’, ‘kid’, ‘young’ or ‘little’. With the help of a false profile created by Bechard, the FBI was even able to arrest a Kentucky resident, Jerry Cannon, for disseminating child pornography through 13 different fake profiles on Facebook. He was, incidentally, also the pastor of a church.

The disturbing part, though, is that it is not easy to put a stop to such activity. Once a user reports a profile or a page, Facebook takes it down, but it can’t stop the people involved from stealthily opening new pages. Also, once a picture has been reported, it is taken down but the person or page displaying such a picture isn’t put under the scanner until reported separately. After Bechard’s campaign and several news reports on the phenomenon, Facebook did try to block reported images. A Facebook spokesperson stated: “We have zero tolerance for child pornography being uploaded onto Facebook and are extremely aggressive in preventing and removing child exploitative content. We scan every photo that is uploaded to the site using PhotoDNA to ensure that this illicit material can’t be distributed and we report all instances of exploitative content to the National Center for Missing and Exploited Children. We’ve built complex technical systems that either block the creation of this content, including in private groups, or flag it for quick review by our team of investigations professionals.”

PhotoDNA basically identifies the picture by reading its pixelations, unique for every image. “It is like a human fingerprint,” says Bechard. The drawback, he claims, is that it can only detect a picture if it is already in the system. “If you’re a paedophile and know your photos are registered, it encourages you to create new images and that basically leads to more child porn. Another thing is that it can’t detect videos, and that is a major drawback in itself,” says Bechard. Nonetheless, the steps did have some effect. “Recent changes include images that aren’t as sexually overt. But even in these, the comments next to pictures are the silver bullet. The paedophiles’ intentions are mirrored in those,” says Bechard.

It’s easy to verify these claims. Within four days of creating a false profile myself, I found pages that use cartoon images like ‘Pedobear’, a meme centred on paedophilia. After I ‘liked’ a few pages, Facebook’s system started suggesting more pages with similar content. It also flashed many profiles, some obviously fake, with children’s pictures on them under ‘People you may know’. Some pages had external links that re-routed one to websites rife with pornographic material. “It’s just everywhere. Absolutely global,” exclaims Bechard. “You know I feel that human nature goes wherever humans go. It cannot be defined by boundaries.”

Facebook insists it has been working with the National Center for Missing and Exploited Children and the New York State Attorney General’s Office in the US, as well as the Child Exploitation and Online Protection Centre in the UK, to use known databases of child exploitative material to heighten its detection and bring those responsible to justice. But Bechard says it is still not enough. “If you look at adult porn sites, you’ll notice that they keep their sites absolutely child porn free because they don’t want to get in trouble with authorities or invite any kind of legal action. So, if they can do it, why can’t Facebook?” he asks.

According to Bechard, Facebook has declined to work with Menapat directly. “Facebook refused to consider us and our plea. According to them, we aren’t from any law authority and neither have educational credibility, so they refused to listen to us,” he says.

And he doesn’t trust them enough to tackle this problem on their own. “Facebook constantly says ‘Tell us and we’ll tell the authorities’. It is basically asking us to report to them, not to authorities directly. But what happens of the proof once a page with such content is gone? Nobody knows. Throughout this time, since we’ve been in contact with them, Facebook officials say that they are constantly improving their security system, that ‘it’s not as bad as it looks’, ‘we’re working with law enforcements’, and basically toe the company line.”

While Facebook’s spokesperson insists that “we’ve created a much safer environment on Facebook than exists offline, where people can share this disgusting material in the privacy of their own homes without anyone watching”, Bechard continues to report the illicit content Menapat finds on Facebook, “and there’s still so much of it”. Twitter, he says, is “horrible in this respect” but Menapat is solely focusing on Facebook for now. “You know, it’s not something that will end in a matter of days or even years. Maybe someday we’ll wake up and it will all be gone,” he says.

The most important mission right now, though, is to locate a victim of child pornography. “We are trying to locate a victim so that s/he can sue Facebook for not taking down the picture,” he says. Menapat, he says, has been communicating through the media both on the issue of child pornography on Facebook and the need to find victims.

“We have not found a victim yet. The problem is that Facebook is relatively new, so victims posted there are still quite young. As such, they are still hidden from view and not of age to come forward as witnesses. If they have been rescued from the situation, then they are being highly protected—and justifiably so. We may have to wait years for legal action against Facebook to become a reality,” he says.
