The Dark Side of the Boon

DURING THE 48-HOUR silence period before the first phase of the 2019 Lok Sabha elections, when no political party can advertise or campaign, I received quite a few political WhatsApp forwards. One was purportedly the text of a BBC survey predicting the Bharatiya Janata Party's victory and declaring this to be good for Indian democracy. Another combined two images: the first showed the Congress leader Sachin Pilot climbing a ladder next to a billboard of Prime Minister Narendra Modi and the second showed him defiling Modi's face with black ink, with the accompanying text underscoring the spitefulness of Congress leaders. The third was a video that showed 'BJP goons' thrashing a Muslim man.

The first was a rehashed version of junk news that had been widely circulated before the Karnataka state elections in 2018. The second showed Modi's face clearly while Pilot's was hazy; the blackening of Modi's face had evidently been done with an ink tool available in any free image-editing software. The third was debunked by the fact-checking site AltNews as gang-related violence. All these forwards were sent by highly educated urban professionals. To test whether the human chain works as effectively in countering misinformation as it does in spreading it, I replied to all three senders, pointing out that the messages were false, backing my claim with verifiable evidence and asking them to do the same with those from whom they had received the forwards, hoping they would, in a sense, pay it backward and dispel the rumours. They didn't. Few would like to be proven wrong and fewer still would like to acknowledge it publicly.

Disinformation (information spread by those who are aware of it being false) thrives because of misinformation (information spread by those who are not aware of it being false). Along with propaganda, disinformation strikes deep into the heart of a democracy, distorting perspectives through flawed narratives. These time-tested weapons of information warfare have become deadlier due to the affordances of social media, which have led to the lumpenisation of the literate.

This is the under-researched aspect of state-orchestrated disinformation campaigns: the role of citizens as active carriers of dangerous online content that polarises the populace and triggers offline violence. Countries like India, Sri Lanka, Myanmar, Turkey and Germany have suffered an increase in hate crimes fuelled by social media-driven disinformation campaigns. In 2018, the Oxford Internet Institute published a report on computational propaganda (the use of automation, algorithms and big-data analytics to manipulate public life), which found India to be among the major countries where social media was used for mass opinion manipulation by at least one political party or agency. Most political parties engage in some form of computational propaganda. Those with more money and manpower can control the content and flow of information. While stories of political parties bankrolling the sunrise sector of disinformation abound and the government mulls over regulating platforms, the root of the problem lies in the number of willing receivers and disseminators of disinformation present in a divided society.

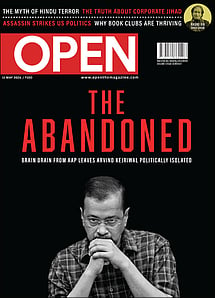


Disinformation campaigns are often built around 'an element of truth', according to the European Union's East StratCom Task Force, which was established to fight Russia's disinformation campaigns. To recognise and red-flag the variants of dis- and misinformation, researcher Claire Wardle in 2017 classified these into seven types: satire or parody (no intention to harm, but with the potential to fool) and six that could harm, namely misleading content (disingenuously framing an issue or individual), imposter content (impersonating genuine content), fabricated content (deceiving through false content), false connection (videos, captions or visuals don't support the content), false context (sharing genuine content with false contextual information) and manipulated content (manipulating genuine information or imagery to deceive). The combination of fiction with facts reinforces the bias of receivers of such information, especially if the sender has the first-mover advantage: those who send the information first set the terms of the conversation, and the anchoring bias of receivers comes into play. In India, following the Pulwama attack, the disinformation spread around that one story was massive, according to Trushar Barot, who leads Facebook's integrity initiatives in India. While the partisan peddlers of disinformation have set up shop on Facebook, targeting 36 per cent of the voting-age population, most of whom are from urban areas, WhatsApp, Helo, ShareChat and TikTok are preferred for semi-urban and rural audiences.

Disinformation campaigns are less about moulding mindsets and more about pandering to the subterranean biases of society so that these come out in the open, making unacceptable speech and action acceptable. Social media aids the velocity, virality and volume of divisive and dangerous speech. When online interactions routinise abusive speech, hate becomes a matter of habit. The oft-cited argument that people spew online the filth they would never shout from the town square only because social media affords them anonymity becomes redundant.

People who are already heavily polarised flock to certain websites regardless of their notoriety for spreading false news.

A 2017 study by William H Dutton et al found that the fears of online echo chambers and filter bubbles abetting junk news are overstated. People will believe what they want to believe. Citizens were found to be active curators of digital disinformation in a 2018 study by Yevgeniy Golovchenko, Mareike Hartmann and Rebecca Adler-Nissen on the Russian news outlets RIA Novosti, Sputnik and RT. Further, a 2018 study of Twitter posts by Sinan Aral, Deb Roy and Soroush Vosoughi showed falsehoods were 70 per cent more likely to be retweeted than facts. They found humans, not bots, to be the primary purveyors of false news. While political astroturfing might be done by seeding false news on social media through commercial botnets (groups of bots), sock-puppet networks and paid human trolls, these seeds are fertilised by people willing to spread such messages. When the online public-private partnership of polarising politics becomes profitable, the enterprise spreads to the offline world.

India is in the midst of the world's largest social media-driven election. Ben Nimmo, a senior fellow at the Atlantic Council's Digital Forensic Research Lab, published a case study on April 8th of pro-BJP and pro-Congress bots deployed on a large scale on February 9th-10th to manipulate Twitter traffic. The impact of this offensive was limited, however, by the relatively low number of followers of each account. WhatsApp, despite its many efforts to curb false and hateful content, is a different matter: it can be used to reach 256 million voters if one assumes one WhatsApp group of 256 members for each of the 1 million polling booths. There are reportedly 87,000 groups on WhatsApp dedicated to spreading information to sway voters, which can potentially reach about 22 million voters. The recipients of false information can quickly spread it across multiple networks. This raises the legal problem of curbing not just the creators of disinformation but also its disseminators without violating free-speech norms.
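The arithmetic behind these reach figures is easy to check. Below is a minimal sketch in Python, assuming every group is full at 256 members and no voter belongs to more than one group, so the results are upper bounds; the function name `potential_reach` is illustrative and does not come from any source.

```python
# Assumption taken from the text: a WhatsApp group holds 256 members.
GROUP_SIZE = 256


def potential_reach(groups: int, group_size: int = GROUP_SIZE) -> int:
    """Upper bound on people reached: every group full, no overlapping members."""
    return groups * group_size


# One group for each of the 1 million polling booths.
booth_reach = potential_reach(1_000_000)

# The 87,000 groups reportedly dedicated to swaying voters.
reported_reach = potential_reach(87_000)

print(f"One group per booth: {booth_reach:,} voters")
print(f"Reported groups:     {reported_reach:,} voters")
```

The second figure works out to a little over 22 million, which matches the estimate quoted above. Real reach would be lower wherever groups are not full or memberships overlap, and higher once recipients forward the content onward.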

The silencing of political opponents, journalists and individual critics is not done only by governments, as shown by several digital media labs in India, the US and Europe that have been conducting research on malicious bots and trolls. This is concerted warfare by the online army of data capitalists, that is, those who have the money to maintain a monopoly over who gets to speak and who doesn't. When political parties become data capitalists, especially populist parties, which thrive on 'us'-versus-'them' rhetoric, majoritarian narratives become dominant narratives. The voices tend to be the loudest and brashest on either side of the political spectrum. In her book The Politics of Fear, Professor Ruth Wodak carried out a discourse analysis of the speeches of right-wing populist parties in Europe and found that they thrived on the 'politics of fear' and the 'arrogance of ignorance', where straight-talking became a euphemism for no-holds-barred rhetoric and intellectuals, defined as those who held liberal views, were derided as woolly-headed tree-huggers. In my research on social media and politics, I have found an increase in the radical right's capacity to mobilise more individuals, especially in countries with more heterogeneous societies. Supporters of right-wing parties tend to follow each other more and are more united in their views than their liberal counterparts, who are fragmented as a group, their refracted views weakening their voices.

While interacting with 300 college-educated social media users in Bengaluru, Delhi and Kolkata as part of my research, I found that they shared false information out of a messy mix of motivations that included partisan bias, confirmation bias, the high aesthetic appeal of the message and, quite often, the need to be the first to spread the most eye-catching, humorous or entertaining news. Some were open about spreading a message even after being apprised of it being false, because it fed their bias, because it was fun, or both. This is when misinformation shape-shifts into disinformation and serves political propaganda campaigns.

Unless we are aware of what scholars Hossein Derakhshan and Claire Wardle call 'information disorder', we won't be able to deal meaningfully with the problems of disinformation, misinformation and propaganda. This involves dealing with three types of bad information (disinformation, misinformation and mal-information, the last being information intended to do harm) at the phases of their creation (by targeting the agent), production (by targeting the message) and distribution (by targeting the interpreter).

While political parties might or might not follow the 48-hour silence period on social media, stopping their supporters from acting as their proxies in spreading false news is extremely difficult. Last-minute aggressive disinformation campaigns can reel in undecided voters. According to a 2018 report by Counterpoint Research, India has around 400-450 million smartphone users, most of whom can be assumed to be part of the 900-million voter base. Read alongside the New Delhi-based Digital Empowerment Foundation's statistic that almost 90 per cent of Indians are not digitally literate, this suggests that citizen-driven misinformation campaigns have the scale and impact to disempower democracy. Strong deterrents are needed. Disinformation needs to be disincentivised economically and politically. It has to be discouraged socially. Digital education is needed to sift falsities from facts. Political parties and people have to be made accountable for spreading disinformation. Only then can the social media-enabled public-private partnership empower a democracy.